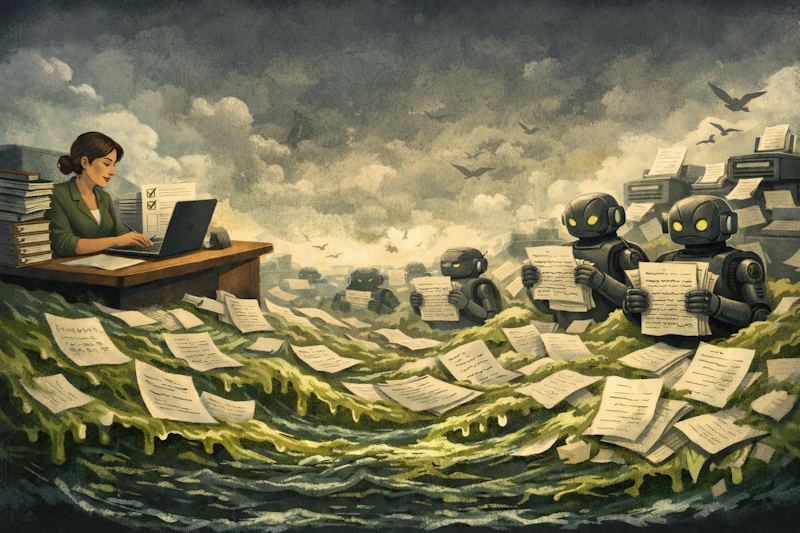

There's a word that's been rattling around the tech world for the past year: SLOP.

It was Merriam-Webster's word of the year for 2025, and it refers to the low-quality, generic, obviously AI-generated content that's flooding the internet, from social media posts to academic papers to, yes, grant applications. If you work in fundraising, you don't need the dictionary definition. You've already seen it land in your inbox or, worse, your outbox.

What slop looks like in grant writing

Late last year, a grant writing technology company ran an advert on LinkedIn claiming to be hiring a bid writer. The small print read: "Don't apply unless you can sift through a thousand grant programmes within one hour and you can write 70 applications in one day." The story was shared by Dan Sutch at an AI in Grantmaking session convened by IVAR and CAST.

The bid writing community reacted with alarm. It turned out it wasn't a real job advert at all; it was a demonstration of what the company's technology could do. Search thousands of live opportunities, generate dozens of applications per day. Just throw in another application.

Meanwhile, on the other side of the desk, funders are telling a different story. In the same discussion, grantmakers shared their experiences of what AI-generated applications actually look like. One funder described the problem with painful clarity: people paste the application question into ChatGPT and it comes back with a generic answer that doesn't reflect their practice. When funders export all the responses to a particular question, they sometimes find different applicants saying the same thing.

Another flagged something subtler: a telltale word. "Whenever I use AI, the word foster always comes up," she said. "And I can see it in application forms and I think, oh no, they've just..." She didn't need to finish the sentence. Everyone knew.

This is what slop looks like in grant writing. It's not just bad grammar or obvious errors. It's generic language that could describe any organisation. It's American spellings in an application to a UK trust, a giveaway that funders are increasingly alert to. It's five different charities all using the same phrasing to answer the same question. It's an application that reads perfectly well on the surface but says absolutely nothing specific about the work you actually do.

Three gut reactions (and why none of them work)

Faced with this, the sector is gravitating towards three responses:

Ignore it and hope it goes away. Keep doing what you've always done. As one charity leader told us: "I feel like my passion comes across and if I used AI, that wouldn't happen." It's an understandable instinct. But for a small charity where one person is writing applications at 9pm after a full day of service delivery, ignoring a potentially transformative tool isn't really an option, especially when the organisations you're competing with aren't ignoring it.

Accept the slop and play the numbers game. If AI can generate 70 applications a day, why not try them all? This is the spray-and-pray approach, and it's already causing real damage. Funders are experiencing significantly greater volumes of applications. Some are introducing expression-of-interest stages to cope. Others are tightening criteria or closing application windows earlier. The irony is brutal: the tools designed to make grant writing easier are making the whole system harder for everyone.

Refuse to play the game. Acknowledge that AI is changing the landscape, but decide you won't be part of it. Write fewer, purely human applications and accept you'll probably win less funding as a result. The problem is that this doesn't change the system. The organisations using AI badly will continue to flood funders, while you've voluntarily stepped back. In a funding environment where major funders are pausing programmes and public finances are under severe pressure, that's not a strategy; it's surrender.

None of these responses address the actual problem.

The real question: can you scale quality?

Here's what I think the conversation should actually be about. AI didn't invent slop in grant writing. Anyone who's worked in fundraising saw rushed, copy-pasted, poorly tailored applications long before ChatGPT existed. Fundraisers have sent applications they knew weren't their best work because the deadline was tomorrow and there were three more to do that week.

What AI has done is make it trivially easy to produce thoughtless output at scale. That's a genuine problem. But the answer isn't to reject AI; it's to use it in a way that scales quality alongside quantity.

Instead of producing more work of the same or worse quality, the goal is to use AI to produce better work in less time. Not more applications, but better ones. Not replacing your experience, but giving you the time to apply it where it matters most.

The encouraging thing is that some people in the sector are already thinking this way.

What thoughtful AI use actually looks like

The National Lottery Community Fund's published guidance on AI makes a clear distinction. The Fund won't reject an application just because AI was used. But it warns that AI often produces generic content that doesn't capture an organisation's unique skills or the voice of the communities it works with. The message: use it, but make sure the result sounds like you, not like everyone.

A fundraiser we spoke to captured this perfectly: "I still plan to spend the three hours," she said. "It's just that with the same time I spent before, the output is so much better."

And the evidence from the other side of the desk supports this approach. Somerset Community Foundation has been exploring how AI tools can reduce application review time, freeing up their team to build deeper relationships with the communities they serve. Their priority isn't replacing human judgement. It's giving humans more time for the things only humans can do.

The real wins from AI in grant writing aren't about speed. They're about specificity. When AI draws on your organisation's actual documents (your past successful applications, your impact reports, your monitoring data), it produces something that sounds like you, references your real work, and cites your actual numbers. When it draws on the internet, it produces something that sounds like everyone.

There's also a levelling effect that matters enormously. The Charity Digital Skills Report shows that while 76% of charities are now using AI, smaller charities lag behind, and they have the most to gain. There are brilliant examples of organisations who can't afford professional bid writers, or whose teams don't have English as a first language, now producing applications of real quality. AI as an equaliser is powerful and important, but only when the AI is working with your specific knowledge and context, not generating generic platitudes.

The disclosure question is coming

Funders aren't ignoring this shift. BBC Children in Need now addresses AI use directly in its application guidance. The National Lottery Community Fund has published principles for how it will use AI and guidance on how it wants applicants to use it. The National Lottery Heritage Fund has followed suit.

This is only going one direction. Within a year, I'd expect most major funders to be asking about AI use as a standard part of the application process. That shouldn't worry you, but it should change how you think about which tools you're using and how.

There's a meaningful difference between saying "I pasted the questions into ChatGPT and submitted what came back" and saying "I used a specialist tool to generate draft responses from our own organisational documents, then reviewed and refined every answer before submission." One suggests laziness. The other suggests a professional operation making intelligent use of available technology.

As one grantmaker put it at the same discussion, perhaps with a wry smile: if we've got charities using AI to write applications and funders using AI to assess them, and we're essentially asking computers to write and mark their own homework, perhaps we should rethink how we're approaching this altogether.

The way forward

The organisations that will do best in this new landscape aren't the ones that write the most applications. They're the ones that use every available tool to make each application count.

That means AI that learns from your organisation's own documents, not the internet. It means applications that reflect your specific work, your real impact data and your authentic voice. It means spending your time where you have the most leverage (refining, tailoring, and adding the human context that no machine can provide) rather than starting from a blank page every time. That's what we're trying to build at Hinchilla.

Small and medium charities in the UK spend approximately £900 million per year just on the grant application process. That's time and money that could be going to the communities they serve. The answer isn't to add more slop to the pile. It's to work smarter, write better, and let the quality speak for itself.

The slop is here. The question is what you do about it.

Ready to rise above the slop?

Hinchilla helps you write better grant applications using your own voice and your past documents, with a 100% data privacy guarantee.