No, ChatGPT's free and Plus tiers are not GDPR compliant for processing personal data. While you can disable training, free consumer accounts lack the Data Processing Agreements (DPAs) required under GDPR when handling beneficiary information, donor details, or any personally identifiable information (PII). Only Team, Enterprise, or API tiers provide the legal framework charities need for ChatGPT data privacy compliance.

GDPR for charities carries particular weight because of the vulnerable people your organisation serves. A caseworker entering client details into a standard ChatGPT account could constitute a data breach, potentially exposing your charity to ICO enforcement action and significant reputational damage.

This guide breaks down exactly how ChatGPT, Claude, and Gemini handle your data, what the risks are for the charity sector specifically, and which tiers you actually need to stay compliant. We will cover the critical questions: does ChatGPT store your data, does ChatGPT share your data with third parties, and what are your ChatGPT privacy options.

Does ChatGPT Store Your Data?

This is one of the most common questions charity professionals ask, and the answer matters enormously for GDPR compliance. Yes, ChatGPT stores your data on OpenAI's servers. Understanding exactly what gets stored, for how long, and who can access it is essential for any charity handling sensitive information.

What ChatGPT Stores

When you use ChatGPT, OpenAI collects and retains:

- Your conversation content: Every prompt you enter and every response generated

- Account information: Email address, name, payment details (for paid accounts)

- Device and usage data: IP address, browser type, operating system, and interaction patterns

- Metadata: Timestamps, session duration, and feature usage

On Free and Plus accounts, your conversation data is retained indefinitely unless you manually delete it. Even after deletion, OpenAI keeps the data for up to 30 days before permanent removal. This means any beneficiary information, donor details, or safeguarding notes entered into ChatGPT could remain on US servers with no guaranteed deletion date.
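The retention arithmetic above can be made concrete with a small sketch. It assumes the 30-day figure quoted in this guide is an upper bound, and the function and constant names are hypothetical, chosen only for illustration.

```python
from datetime import date, timedelta
from typing import Optional

# Assumption: 30 days is the maximum post-deletion retention quoted in this guide.
POST_DELETION_RETENTION_DAYS = 30


def latest_removal_date(deleted_on: Optional[date]) -> Optional[date]:
    """Return the latest date a conversation should be permanently removed.

    None means the conversation was never deleted, so on Free and Plus
    accounts there is no removal date at all (indefinite retention).
    """
    if deleted_on is None:
        return None
    return deleted_on + timedelta(days=POST_DELETION_RETENTION_DAYS)


print(latest_removal_date(date(2025, 3, 1)))  # 2025-03-31
print(latest_removal_date(None))              # None (retained indefinitely)
```

A conversation that nobody deletes never gets a removal date, which is why a paste made in error should be deleted straight away rather than left in the chat history.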

The ChatGPT Privacy Policy in Plain English

OpenAI's ChatGPT privacy policy runs to thousands of words, but here is what matters for charities:

- Default training: Your conversations are used to improve future AI models unless you actively opt out

- Human review: OpenAI staff and contractors may read samples of conversations for quality assurance and safety

- Data transfers: Your data is processed in the United States, which has implications for UK GDPR compliance

- No consumer DPAs: Free and Plus tiers do not offer Data Processing Agreements

For charity professionals, the critical point is that ChatGPT saves your data in a way that creates GDPR risk. Without a DPA, you have no legal agreement governing how OpenAI processes personal data on your behalf.

Does ChatGPT Store Your Data Even with Training Disabled?

Yes. Many people conflate "training" with "storage," but they are separate concerns. You can disable training on Free and Plus accounts (see instructions below), but this does not delete your data or prevent it from being stored.

With training disabled, your conversations are still:

- Stored on OpenAI's servers

- Subject to 30-day retention after deletion

- Potentially reviewed by humans for safety and quality

- Processed without a DPA

The bottom line: Disabling training makes ChatGPT more private, but it does not make it GDPR compliant for processing personal data. If you need to handle beneficiary information, you need ChatGPT Team, Enterprise, or API with a signed DPA.

Does ChatGPT Share Your Data?

The second critical question for charity data protection is whether ChatGPT shares your data with third parties. Understanding this is essential for trustees fulfilling their governance duties and for completing your data protection impact assessments.

Who Can Access Your ChatGPT Conversations?

OpenAI may share your data with:

- Service providers: Third-party companies that help operate ChatGPT (cloud hosting, analytics, customer support)

- Human reviewers: OpenAI employees and contractors who review conversation samples for safety and quality improvement

- Legal and safety requests: Law enforcement agencies with valid legal process

- Business transfers: If OpenAI is acquired or merges, your data may transfer to new owners

Does ChatGPT Share Your Data with Advertisers?

No, OpenAI does not currently sell your data to advertisers or use it for targeted advertising. However, this does not mean your data is private. The fact that human reviewers can read conversation samples is enough to create a data breach risk for charities handling sensitive information.

The Third-Party Risk for Charities

When a charity staff member enters beneficiary data into ChatGPT, that data could theoretically be read by:

- OpenAI employees in the United States

- Third-party contractors hired for content moderation

- Cloud service providers hosting the infrastructure

None of these third parties have any duty of care to your beneficiaries. They are not bound by your safeguarding policies. They have not signed your charity's confidentiality agreements.

This is why ChatGPT data privacy matters so much for the charity sector. It is not just about GDPR fines. It is about maintaining the trust of vulnerable people who rely on your discretion.

Why GDPR for Charities Carries Extra Weight

Before diving into the technical platform comparisons, it is worth understanding why AI data privacy matters more for charities than for commercial organisations.

Beneficiary Vulnerability

Many charities work with vulnerable individuals, including children, people experiencing homelessness, domestic abuse survivors, refugees, and those with mental health conditions. This data often falls under GDPR's "special category" protections, requiring even stricter handling than standard personal data.

Safeguarding Obligations

Beyond GDPR, charities have safeguarding duties. If a staff member or volunteer enters safeguarding-related information into an AI tool that lacks proper data protections, you may have breached multiple regulatory frameworks simultaneously, including your safeguarding policy, data protection law, and potentially your charitable objectives.

Trustee Liability

Charity trustees have a legal duty to ensure the organisation complies with data protection law. The Charity Commission has made clear that ignorance is not an excuse, and trustees can be held personally accountable for serious data breaches. Every trustee board should understand the charity's AI usage policy and the associated risks. For more on trustee responsibilities, see our guide to what trustees need to know about changing charity regulations.

Funder Expectations

Major funders increasingly ask about data handling practices in applications. A data breach or ICO investigation could jeopardise current and future funding relationships. Some funders now specifically ask about AI tool usage in their due diligence processes.

ChatGPT Privacy Settings: How to Disable Training

While disabling training does not make ChatGPT GDPR compliant, it does reduce your risk profile and is a sensible precaution for any charity staff using the free tier for non-sensitive tasks.

Step-by-Step Instructions

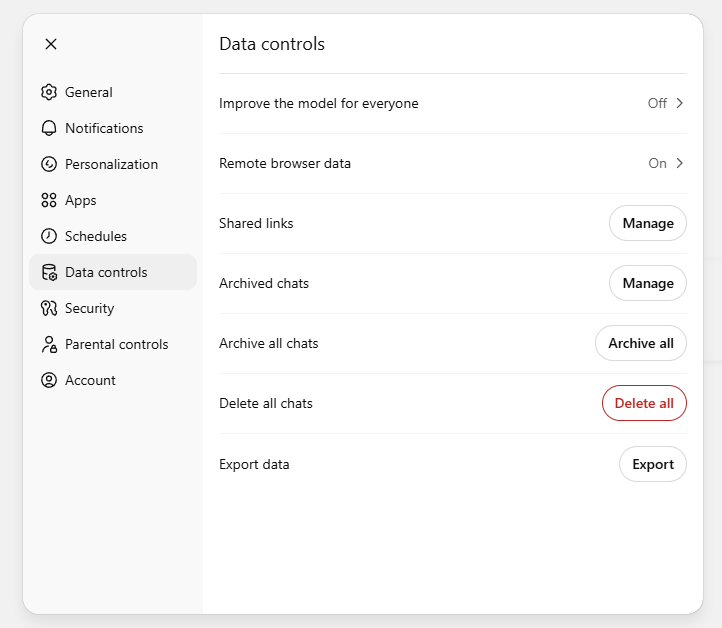

- Log into ChatGPT and click your profile icon in the bottom-left corner, then select Settings

- Go to Data Controls and toggle off "Improve the model for everyone"

This setting persists across sessions, so you only need to do it once.

Alternative: Temporary Chat Mode

For one-off queries where you want extra privacy, use "Temporary Chat" mode:

- Click the model selector dropdown at the top of the chat

- Select "Temporary Chat"

Temporary chats are not saved to your history and are not used for training. However, they are still subject to OpenAI's data retention and safety review policies.

The ChatGPT Plus Trap

A common misconception is that paying for ChatGPT Plus ($20/month) provides better privacy protection. It does not. ChatGPT Plus has the same privacy settings, the same data retention, and the same lack of DPA as the free tier. Paying for Plus gives you faster access and GPT-4, but it does not make you GDPR compliant.

AI Platform Comparison: ChatGPT vs Claude vs Gemini

Different AI platforms have different privacy policies and compliance options. Here is a detailed breakdown for charity professionals.

Skip ahead to the Comparison table if you want a quick overview.

OpenAI (ChatGPT)

Training Policy: By default, OpenAI uses conversations from consumer tiers (Free and Plus) to train future models. Business tiers (Team, Enterprise, API) explicitly exclude your data from training.

Data Retention:

- Consumer tiers: Indefinite retention, 30 days post-deletion

- Business tiers: Configurable, typically 30 days

Human Review: Consumer conversations may be reviewed by human contractors for safety and quality. Enterprise tier eliminates this.

DPA Availability: Only for Team, Enterprise, and API tiers.

Charity Verdict: Use ChatGPT Team ($25-30/user/month) or Enterprise for any work involving personal data. Free and Plus tiers are only suitable for genuinely non-sensitive tasks.

Anthropic (Claude)

Training Policy: Since late September 2025, Claude Free, Pro, and Max accounts operate on an opt-out model, meaning your conversations are used for training by default. Commercial plans (Claude for Work, Team, Enterprise, API) exclude your data from training.

Data Retention:

- Training disabled: 30 days

- Training enabled (default): Up to 5 years

- Business tiers: Configurable

Human Review: Trust and Safety reviews may occur even with training disabled. Business tiers provide stronger protections.

DPA Availability: Only for Team, Enterprise, and API tiers.

Charity Verdict: If using Claude Free/Pro, immediately disable training in Settings > Privacy. For work involving personal data, use Claude Team or Enterprise.

Claude Privacy Settings

To disable training on Claude Free, Pro, or Max accounts:

- Log into claude.ai and click your name or email in the bottom-left corner

- Go to Settings

- Go to the Privacy tab

- Toggle off "Help improve Claude"

With training disabled, your conversations are retained for 30 days instead of up to 5 years. However, Trust and Safety reviews may still occur, and you still lack a DPA for GDPR compliance.

Google (Gemini)

Training Policy: The consumer Gemini web app trains on your prompts by default. Google Workspace with Gemini does not train on your data.

Data Retention:

- Standard: 18 months by default (can be changed to 3 or 36 months)

- Activity disabled: Up to 72 hours

- Human-reviewed data: Up to 3 years even after deletion

- Workspace: Per commercial terms

Human Review: This is the critical point. Google explicitly states that human reviewers routinely read Gemini web app conversations, which is a hard stop for any sensitive data.

DPA Availability: Only for Google Workspace customers.

Charity Verdict: The routine human review makes consumer Gemini unsuitable for any charity work. Only use Gemini through Google Workspace with a signed Data Processing Amendment.

Gemini Privacy Settings

If you must use consumer Gemini for genuinely non-sensitive tasks:

- Go to myactivity.google.com

- Select Gemini Apps Activity

- Click Turn off (or "Turn off and delete activity")

Note: This removes your ability to save chat history.

AI Platform Comparison Table for Charities

| Feature | ChatGPT Free/Plus | ChatGPT Team | ChatGPT Enterprise | Claude Free/Pro/Max | Claude Team/Enterprise | Gemini (Consumer) | Google Workspace + Gemini |
|---|---|---|---|---|---|---|---|
| Default Training | Yes | No | No | Yes (since Sept 2025) | No | Yes | No |
| Can Disable Training | Yes | N/A | N/A | Yes | N/A | Yes | N/A |
| DPA Available | No | Yes | Yes | No | Yes | No | Yes |
| Data Retention | Indefinite (30 days post-deletion) | 30 days | Configurable | 30 days, or up to 5 years if training enabled | Configurable | 18 months; 3 years for human-reviewed | Per Workspace terms |
| Human Review Possible | Yes | Limited | No | Yes (Trust & Safety) | No | Yes (routine) | No |
| GDPR Compliant for PII | No | Yes | Yes | No | Yes | No | Yes |
| Suitable for Beneficiary Data | No | Yes | Yes | No | Yes | No | Yes |
| Approx. Cost (per user/month) | Free / $20 | $25-30 | Custom | Free / $20 / $100 | $25-30+ | Free | Varies |

Charity-Specific Scenarios: What Can Go Wrong?

Real-world examples help illustrate the risks. These scenarios are composites based on common charity situations.

Scenario 1: Caseworker Enters Client Details

A caseworker is struggling to write up complex case notes and pastes a client's story into ChatGPT Free to help summarise it. The client's name, address, and details of their domestic abuse situation are now:

- Stored on OpenAI's servers indefinitely

- Potentially used to train future models

- Possibly reviewed by human contractors

This is likely to be a personal data breach. The charity has no DPA with OpenAI, no lawful basis for this data transfer, and no way to guarantee the data will be deleted.

Scenario 2: Grant Application with Beneficiary Stories

A grants officer uses the free Gemini app to polish a funding application that includes anonymised beneficiary case studies. However, the "anonymisation" includes the town name and specific circumstances that could identify individuals.

Risk: Human reviewers at Google may read this content, and it could be retained for up to 3 years. Even "anonymised" data can become identifiable when combined with other information.

Scenario 3: Volunteer Using Personal Account

A volunteer uses their personal Claude Pro account to help draft safeguarding policies, including example scenarios based on real incidents (with names changed).

Risk: The charity has no oversight, no DPA, and no control over this data. Since late September 2025, Claude trains on consumer user data by default, meaning this sensitive information could be used to train future models unless the volunteer has actively opted out.

Scenario 4: Trustee Board Paper Preparation

A CEO uses ChatGPT Plus to summarise financial reports and HR matters for a board paper, including staff names and salary information.

Risk: Staff PII is now on OpenAI's servers without a DPA. This could be a breach of staff trust and GDPR simultaneously.

Scenario 5: Donor Communication

A fundraising officer pastes a donor complaint containing personal details into ChatGPT to help draft a response.

Risk: Donor data could be retained indefinitely and potentially reviewed by OpenAI staff. You should never put donor information into a standard ChatGPT consumer account.

What Should Your Charity Do?

If You Have No Budget for Paid AI Tools

- Create a clear AI usage policy that prohibits entering any personal data, beneficiary information, or sensitive organisational data into free AI tools

- Train staff and volunteers on what counts as PII and why they cannot use AI for certain tasks

- Use AI only for genuinely non-sensitive tasks: drafting generic social media posts, brainstorming event ideas, explaining concepts, or improving writing that contains no personal information

- Document your policy and ensure it is part of your data protection and IT policies

If You Can Budget for Paid Tiers

- Assess which platform best fits your needs based on the comparison table above

- Start with ChatGPT Team or Claude Team as entry-level compliant options (around $25-30 per user per month)

- Set up organisational accounts rather than relying on individual subscriptions, so you maintain oversight and control

- Ensure you sign the DPA offered by your chosen provider

- Configure data retention settings according to your data protection policy

Regardless of Budget

- Add AI tools to your data protection impact assessment (DPIA) if you plan to use them for any tasks involving personal data

- Update your privacy notices if AI tools will process personal data in ways individuals should know about

- Brief your trustees on AI usage and associated risks, so they can fulfil their oversight duties

- Create an incident response plan for AI-related data breaches

Staff and Volunteer Training Essentials

Any charity using AI tools, even free ones for non-sensitive tasks, should train staff and volunteers on:

What Counts as Personal Data

Personal data covers names, addresses, email addresses, and phone numbers, but also anything that could identify someone when combined with other information (job title + organisation, specific circumstances + location, date of birth + postcode, etc.).

What Counts as Sensitive/Special Category Data

Health information, religious beliefs, ethnic origin, political opinions, sexual orientation, trade union membership, genetic or biometric data, and criminal records. This data requires extra protection under GDPR.
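For training sessions, a rough pre-paste check can help staff see what "obvious" personal data looks like. The sketch below is an illustration only: the patterns are simplified assumptions for email addresses, UK-style phone numbers, and UK-style postcodes, and the function name is hypothetical. It cannot detect names, case details, or special category data, so an empty result does not mean the text is safe to share.

```python
import re

# Simplified, assumed patterns for illustration; they will miss many real cases.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "uk_phone": re.compile(r"(?:\+44\s?|\b0)\d{2,4}[\s-]?\d{3,4}[\s-]?\d{3,4}\b"),
    "uk_postcode": re.compile(
        r"\b[A-Z]{1,2}\d[A-Z\d]?\s?\d[A-Z]{2}\b", re.IGNORECASE
    ),
}


def find_possible_pii(text: str) -> dict[str, list[str]]:
    """Return every match for each pattern, keyed by the kind of data."""
    return {kind: pattern.findall(text) for kind, pattern in PII_PATTERNS.items()}


def looks_risky(text: str) -> bool:
    """True if any pattern matched. False only means nothing obvious was found."""
    return any(find_possible_pii(text).values())


draft = "Contact Jane at jane@example.org or 07700 900123, postcode SW1A 1AA."
print(find_possible_pii(draft))
print(looks_risky("Draft a generic social media post about our summer fair."))
```

Note that the name "Jane" in the sample passes straight through. Pattern matching is a prompt for human judgement, not a substitute for it.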

The Difference Between "Training Disabled" and "Private"

Make clear that disabling training does not make free tools safe for sensitive data. The data is still stored, still potentially reviewed by humans, and still processed without a DPA.

How to Check ChatGPT Privacy Settings

Walk through how to verify training is disabled on any personal accounts being used for non-sensitive charity work. Show staff where to find Settings > Data Controls.

What to Do If They Make a Mistake

If someone accidentally enters sensitive data into a free AI tool, they should report it immediately as a potential data breach. Your charity can then assess the risk, determine whether ICO notification is required, and take appropriate action.
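Prompt reporting matters because where ICO notification is required, the window is 72 hours from the point the charity becomes aware of the breach. A minimal sketch of that deadline arithmetic follows. The function names are hypothetical, and it assumes you record the moment of awareness as a timezone-aware timestamp.

```python
from datetime import datetime, timedelta, timezone

# The 72-hour window runs from when the organisation becomes aware of the breach.
NOTIFICATION_WINDOW = timedelta(hours=72)


def ico_notification_deadline(became_aware_at: datetime) -> datetime:
    """Latest time to notify the ICO, if notification turns out to be required."""
    return became_aware_at + NOTIFICATION_WINDOW


def hours_remaining(became_aware_at: datetime, now: datetime) -> float:
    """Hours left before the deadline (negative once it has passed)."""
    remaining = ico_notification_deadline(became_aware_at) - now
    return remaining.total_seconds() / 3600


aware = datetime(2025, 6, 2, 9, 30, tzinfo=timezone.utc)
print(ico_notification_deadline(aware))                    # 2025-06-05 09:30:00+00:00
print(hours_remaining(aware, aware + timedelta(hours=24)))  # 48.0
```

The window includes weekends and bank holidays, so a mistake reported on a Friday afternoon leaves very little working time to assess the risk.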

Approved Tools and Use Cases

Be specific about which AI tools are approved, for which purposes, and who can use them. A vague policy leads to vague compliance.
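One way to keep an approved-tools list specific is to record it as data rather than prose. The sketch below is a hypothetical register, not an official schema: the class and function names are invented for illustration, and the values are copied from the comparison table in this guide, so they should be re-checked against each provider's current terms before you rely on them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolTier:
    trains_by_default: bool
    dpa_available: bool


# Values taken from this guide's comparison table (assumed current; verify them).
TOOL_REGISTER = {
    "ChatGPT Free/Plus": ToolTier(trains_by_default=True, dpa_available=False),
    "ChatGPT Team": ToolTier(trains_by_default=False, dpa_available=True),
    "ChatGPT Enterprise": ToolTier(trains_by_default=False, dpa_available=True),
    "Claude Free/Pro/Max": ToolTier(trains_by_default=True, dpa_available=False),
    "Claude Team/Enterprise": ToolTier(trains_by_default=False, dpa_available=True),
    "Gemini (Consumer)": ToolTier(trains_by_default=True, dpa_available=False),
    "Google Workspace + Gemini": ToolTier(trains_by_default=False, dpa_available=True),
}


def allowed_for_personal_data(tool: str) -> bool:
    """A tool qualifies only if it is registered, offers a DPA, and does not train by default."""
    tier = TOOL_REGISTER.get(tool)
    if tier is None:
        return False  # anything not on the register is treated as not approved
    return tier.dpa_available and not tier.trains_by_default


approved = [name for name in TOOL_REGISTER if allowed_for_personal_data(name)]
print(approved)
```

Treating unknown tools as not approved keeps the default safe: a new AI product has to be assessed and added to the register before anyone uses it with personal data.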

Frequently Asked Questions

Does ChatGPT store your data?

Yes. ChatGPT stores your conversations on OpenAI's servers. On Free and Plus accounts, this data is retained indefinitely unless you manually delete it, and even then it takes up to 30 days to be permanently removed. OpenAI may also use your conversations to train future models (unless you disable this) and human reviewers may read samples for quality assurance. For charities, this means any beneficiary information entered into ChatGPT could be stored on US servers with no guaranteed deletion date.

Does ChatGPT share your data?

OpenAI shares data with service providers, human reviewers, and may disclose data for legal compliance. They do not sell data to advertisers. However, the human review element means your conversations could be read by OpenAI employees or contractors, which creates a data breach risk for sensitive charity information.

Does ChatGPT save your data if you use Temporary Chat?

Temporary chats are not saved to your history and are not used for training. However, they are still subject to OpenAI's standard data retention (up to 30 days) and safety review policies. Temporary Chat is more private but not completely private.

Is ChatGPT GDPR compliant for UK charities?

ChatGPT Free and Plus tiers are not GDPR compliant for processing personal data. They lack Data Processing Agreements, have indefinite data retention, and allow human review of conversations. Only ChatGPT Team, Enterprise, or API tiers with signed DPAs are suitable for handling beneficiary, donor, or staff personal data.

Can charities use ChatGPT under GDPR?

Yes, but with strict limitations. Charities can use free ChatGPT for tasks that involve no personal data whatsoever, such as brainstorming ideas or improving generic text. For any task involving personal data, charities must use business tiers (Team/Enterprise) with appropriate DPAs in place.

Does ChatGPT train on my data if I pay for Plus?

Yes. ChatGPT Plus ($20/month) trains on your data by default, just like the free tier. You can disable this in settings, but you still lack a DPA and your data is still retained and potentially reviewed. Paying for Plus does not make you GDPR compliant.

What happens if a volunteer enters beneficiary data into ChatGPT?

This could constitute a data breach under GDPR. The charity would need to assess the risk, potentially notify the ICO within 72 hours, and consider whether affected individuals need to be informed. The charity should also review its policies and training to prevent recurrence.

Which AI platform is safest for charities handling sensitive data?

For charities needing to process personal data with AI, the safest options are ChatGPT Team/Enterprise, Claude Team/Enterprise, or Google Workspace with Gemini. All three offer DPAs, have no default training on your data, and provide administrative controls. The specific choice depends on your existing tech stack and budget.

Can we use AI for grant applications that mention beneficiaries?

Only if you are using a GDPR-compliant business tier with a DPA. Even then, you should minimise personal data, use genuine anonymisation where possible, and ensure your data protection policy covers this use case. For free AI tools, do not include any identifiable beneficiary information.

Do we need to add AI tools to our privacy notice?

If you are using AI tools to process personal data in ways that individuals would not reasonably expect, yes. If you are only using AI for internal non-personal tasks, it is less likely to be necessary, but you should document your decision-making.

What should be in our charity's AI usage policy?

At minimum: approved tools and tiers, prohibited uses (especially regarding personal data), training requirements, incident reporting procedures, and regular review dates. The policy should align with your existing data protection policy and be approved by trustees.

Summary: Key Takeaways for Charity Leaders

- ChatGPT Free and Plus are not GDPR compliant for processing personal data. Neither are Claude Free/Pro/Max or consumer Gemini.

- Does ChatGPT store your data? Yes. Indefinitely, with human review possible.

- Does ChatGPT share your data? Yes. With service providers and human reviewers.

- Disabling training helps but is not enough. You still lack a DPA and your data is still stored and potentially reviewed.

- Business tiers are required for personal data. ChatGPT Team, Claude Team, or Google Workspace with Gemini are the minimum for GDPR compliance.

- Train your staff and volunteers. Clear policies and training are essential even if you only use free tools for non-sensitive tasks.

- Brief your trustees. They can be held personally accountable for serious data protection failures.

References and Documentation

- OpenAI Privacy Policy and Enterprise Privacy: Details the default training on Free/Plus accounts, the 30-day deletion delay, retention policies, and the DPA availability exclusively for Team/Enterprise/API tiers. (OpenAI Privacy Policy | Enterprise Privacy)

- Anthropic Privacy Center and Policy: Outlines the late September 2025 shift to opt-out training for consumer accounts (training on by default), the 30-day retention policy for those who opt out (5 years if training enabled), and the strict exclusion of commercial/API data from training. (Anthropic Privacy Center)

- Google Gemini Apps Privacy Hub and Workspace Terms: Details the standard 18-month retention, the use of human reviewers for consumer Gemini App conversations, the 72-hour safety retention when activity is disabled, the 3-year retention for human-reviewed data, and the separate, protected terms for Google Workspace users. (Gemini Apps Privacy Notice)

- ICO Guidance on AI and Data Protection: The Information Commissioner's Office guidance on using AI tools in compliance with UK GDPR. (ICO AI Guidance)

- Charity Commission Guidance: Trustee duties including data protection compliance. (Charity Commission)